
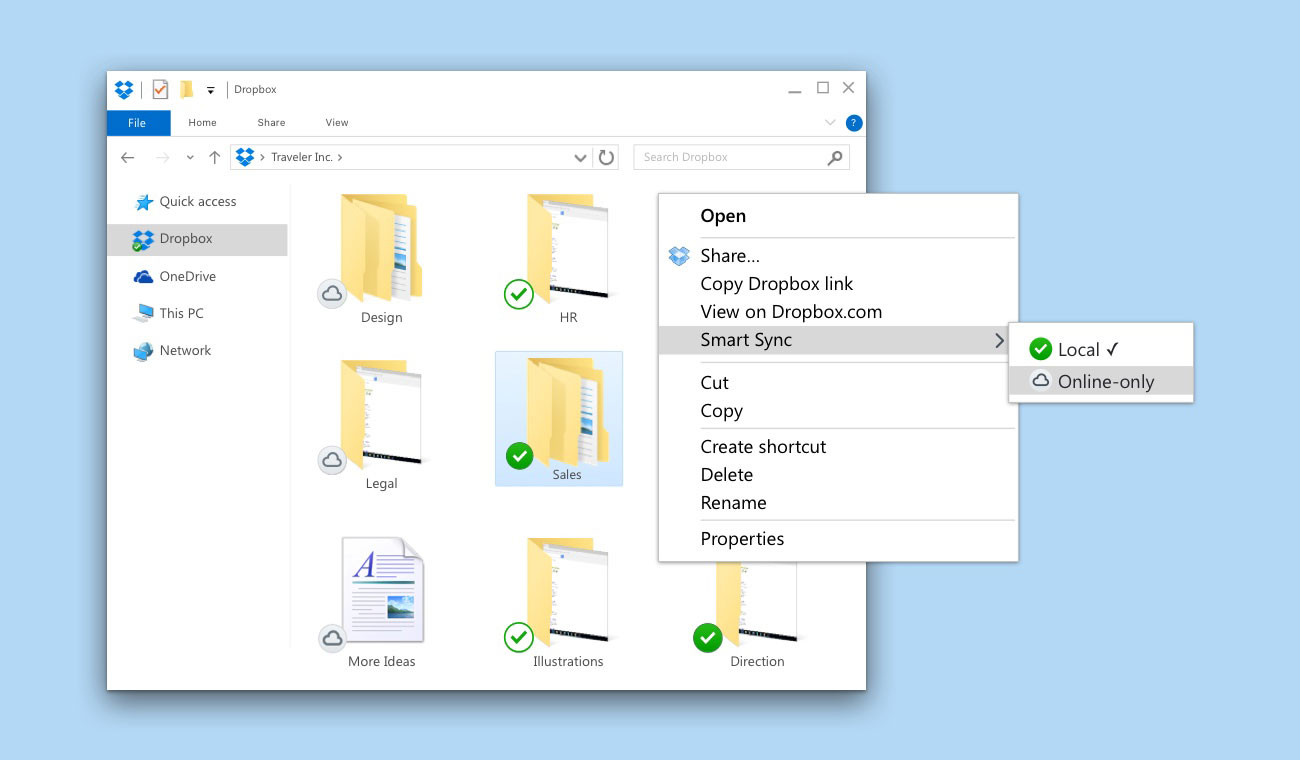

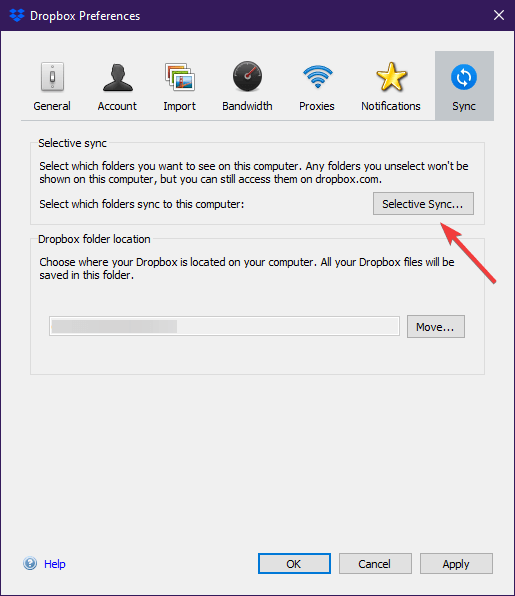

Open the settings and navigate to Preferences, then Sync. Select Selective Sync and put each file in the sync folder. Here, unselect any unnecessary files.

Dropbox and OneDrive have similar file syncing capabilities, using the model that Dropbox made popular: a sync folder on the user’s computer that syncs with the cloud. Both providers offer selective sync, which lets users choose which folders to sync to their hard drive and which to keep only in the cloud.

Selective Sync Dropbox Archive Your Footage

I had persistent sync issues with Dropbox for several weeks: it was running continuously and revving up the CPU on my Mac (as shown in Activity Monitor).

Over the past four years, we've been working hard on rebuilding our desktop client's sync engine from scratch.

We're proud to announce today that we've shipped this new sync engine (codenamed "Nucleus") to all Dropbox users.

Rewriting the sync engine was really hard, and we don’t want to blindly celebrate it, because in many environments it would have been a terrible idea. It turned out that this was an excellent idea for Dropbox, but only because we were very thoughtful about how we went about this process. In particular, we’re going to share reflections on how to think about a major software rewrite and highlight the key initiatives that made this project a success, like having a very clean data model.

To begin, let's rewind the clock to 2008, the year Dropbox sync first entered beta. At first glance, a lot of Dropbox sync looks the same as today. The user installs the Dropbox app, which creates a magic folder on their computer, and putting files in that folder syncs them to Dropbox servers and their other devices. Dropbox servers store files durably and securely, and these files are accessible anywhere with an Internet connection.

Syncing files becomes much harder at scale, and understanding why is important for understanding why we decided to rewrite.

Distributed systems are hard

The scale of Dropbox alone is a hard systems engineering challenge. But putting raw scale aside, file synchronization is a unique distributed systems problem, because clients are allowed to go offline for long periods of time and reconcile their changes when they return. Network partitions are anomalous conditions for many distributed systems algorithms, yet they are standard operation for us.

Getting this right is important: Users trust Dropbox with their most precious content, and keeping it safe is non-negotiable. Bidirectional sync has many corner cases, and durability is harder than just making sure we don’t delete or corrupt data on the server.
Consider the case where a move is represented as a delete at the old location and an add at the new location and, due to a transient network hiccup, the delete goes through but its corresponding add does not. Then, the user would see the file missing on the server and their other devices, even though they only moved it locally.

Durability everywhere is hard

Dropbox also aims to “just work” on users’ computers, no matter their configuration. We support Windows, macOS, and Linux, and each of these platforms has a variety of filesystems, all with slightly different behavior. Under the operating system, there's enormous variation in hardware, and users also install different kernel extensions or drivers that change the behavior within the operating system. Environment-specific issues often only show up in large populations, since a rare filesystem bug may only affect a very small fraction of users. So at scale, “just working” across many environments and providing strong durability guarantees are fundamentally opposed.

Testing file sync is hard

With a sufficiently large user base, just about anything that’s theoretically possible will happen in production. Debugging issues in production is much more expensive than finding them in development, especially for software that runs on users’ devices. So, catching regressions with automated testing before they hit production is critical at scale.

However, testing sync engines well is difficult since the number of possible combinations of file states and user actions is astronomical. A shared folder may have thousands of members, each with a sync engine with varied connectivity and a differently out-of-date view of the Dropbox filesystem.
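
The move example above (a delete that lands without its matching add) can be made concrete with a toy model. This is only a sketch under stated assumptions: `Server`, `move_as_delete_and_add`, and `sync` are hypothetical names invented for illustration, not Dropbox APIs, and the "network" is simulated by skipping one operation.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

Op = Tuple  # ("delete", path) or ("add", path, data); hypothetical wire format


@dataclass
class Server:
    """Toy server state: a mapping from path to file contents."""
    files: Dict[str, bytes] = field(default_factory=dict)

    def apply(self, op: Op) -> None:
        kind, path, *rest = op
        if kind == "delete":
            self.files.pop(path, None)
        elif kind == "add":
            self.files[path] = rest[0]


def move_as_delete_and_add(src: str, dst: str, data: bytes) -> List[Op]:
    """Encode a local move as two independent operations."""
    return [("delete", src), ("add", dst, data)]


def sync(server: Server, ops: List[Op], drop_index: Optional[int] = None) -> None:
    """Send ops one at a time; drop_index simulates a request lost in transit."""
    for i, op in enumerate(ops):
        if i == drop_index:
            continue  # this operation never reaches the server
        server.apply(op)


server = Server({"/a/report.txt": b"contents"})
ops = move_as_delete_and_add("/a/report.txt", "/b/report.txt", b"contents")
sync(server, ops, drop_index=1)  # the add is lost, the delete is not
print(server.files)  # {}
```

Because the two operations are independent, losing the second one leaves the server with no copy of the file at all, which is the failure described in the text.
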

For example, consider the case where we have three folders with one nested inside another.

Okay, so syncing files at scale is hard. Back in 2016, it looked like we had solved that problem pretty well. We had hundreds of millions of users, new product features like Smart Sync on the way, and a strong team of sync experts. Sync Engine Classic had years of production hardening, and we had spent time hunting down and fixing even the rarest bugs.

Joel Spolsky called rewriting code from scratch the "single worst strategic mistake that any software company can make." Successfully pulling off a rewrite often requires slowing feature development, since progress made on the old system needs to be ported over to the new one.

In the course of building Smart Sync, we'd made many incremental improvements to the system, cleaning up ugly code, refactoring interfaces, and even adding Python type annotations. We'd added copious telemetry and built processes for ensuring maintenance was safe and easy. However, these incremental improvements weren’t enough.

Shipping any change to sync behavior required an arduous rollout, and we'd still find complex inconsistencies in production. The team would have to drop everything, diagnose the issue, fix it, and then spend time getting their apps back into a good state. Finally, we poured time into incremental performance wins but failed to appreciably scale the total number of files the sync engine could manage.

There were a few root causes for these issues, but the most important one was Sync Engine Classic's data model. The data model was designed for a simpler world without sharing, and files lacked a stable identifier that would be preserved across moves. There were few consistency guarantees, and we'd spend hours debugging issues where something theoretically possible but "extremely unlikely" would show up in production.
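
To illustrate what a stable identifier preserved across moves buys you, here is a minimal sketch. It is an assumption-laden toy, not the actual Nucleus data model: the `Node` and `Tree` classes, their fields, and the integer IDs are all hypothetical. The point is only that a move becomes a single update to one record, so there is no window in which the file exists nowhere.

```python
import itertools
from dataclasses import dataclass
from typing import Dict, Optional

ROOT_ID = 0  # hypothetical fixed ID for the root folder


@dataclass
class Node:
    file_id: int              # stable: never changes after creation
    parent_id: Optional[int]  # None only for the root
    name: str


class Tree:
    def __init__(self) -> None:
        self._ids = itertools.count(1)
        self.nodes: Dict[int, Node] = {ROOT_ID: Node(ROOT_ID, None, "")}

    def create(self, parent_id: int, name: str) -> int:
        file_id = next(self._ids)
        self.nodes[file_id] = Node(file_id, parent_id, name)
        return file_id

    def move(self, file_id: int, new_parent_id: int, new_name: str) -> None:
        """A move rewrites one record in place; the ID stays the same."""
        node = self.nodes[file_id]
        node.parent_id, node.name = new_parent_id, new_name

    def path(self, file_id: int) -> str:
        """Derive the path by walking parent links up to the root."""
        parts = []
        node = self.nodes[file_id]
        while node.parent_id is not None:
            parts.append(node.name)
            node = self.nodes[node.parent_id]
        return "/" + "/".join(reversed(parts))


tree = Tree()
a = tree.create(ROOT_ID, "a")
b = tree.create(ROOT_ID, "b")
report = tree.create(a, "report.txt")
tree.move(report, b, "report.txt")
print(tree.path(report))  # /b/report.txt
```

In this sketch paths are derived rather than stored, so renaming or moving a folder does not require touching any of its descendants.
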
We relied on slow rollouts and debugging issues in the field rather than automated pre-release testing. Sync Engine Classic’s permissive data model meant we couldn't check much in stress tests, since there were large sets of undesirable yet still legal outcomes we couldn't assert against. Having a strong data model with tight invariants is immensely valuable for testing, since it's always easy to check if your system is in a valid state.

We discussed above how sync is a very concurrent problem, and testing and debugging concurrent code is notoriously difficult. In practice, we ended up using very coarse-grained locks held for long periods of time. This architecture sacrificed the benefits of parallelism in order to make the system easier to reason about.

Let’s distill the reasons for our decision to rewrite into a “rewrite checklist” that can help navigate this kind of decision for other systems.

Have you exhausted incremental improvements?

□ Have you tried refactoring code into better modules?

Poor code quality alone isn't a great reason to rewrite a system. Renaming variables and untangling intertwined modules can all be done incrementally, and we spent a lot of time doing this with Sync Engine Classic. Python's dynamism can make this difficult, so we added MyPy annotations as we went to gradually catch more bugs at compile time. But the core primitives of the system remained the same, as refactoring alone cannot change the fundamental data model.

□ Have you tried improving performance by optimizing hotspots?

Software often spends most of its time in very little of the code. We had a team working on performance and scale for months, and they had great results for improving file content transfer performance. But improvements to our memory footprint, like increasing the number of files the system could manage, remained elusive.
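
The earlier point about tight invariants making stress tests checkable can be sketched as follows. This is a hypothetical illustration, not Dropbox's test harness: the tree representation, the three invariants (no dangling parents, no duplicate sibling names, no cycles), and the `stress` driver are assumptions chosen to keep the example small. A seeded random generator keeps every run reproducible.

```python
import random
from typing import Dict, Iterator, Optional, Tuple

ROOT = 0
# file_id -> (parent_id, name); only the root has parent None.
Tree = Dict[int, Tuple[Optional[int], str]]


def ancestors(tree: Tree, file_id: int) -> Iterator[int]:
    """Yield parent IDs from file_id upward (endless if there is a cycle)."""
    parent = tree[file_id][0]
    while parent is not None:
        yield parent
        parent = tree[parent][0]


def check_invariants(tree: Tree) -> None:
    """Raise AssertionError if the tree is not in a valid state."""
    siblings = set()
    for file_id, (parent, name) in tree.items():
        if file_id == ROOT:
            assert parent is None, "root must not have a parent"
            continue
        assert parent in tree, f"{file_id} has a dangling parent"
        assert (parent, name) not in siblings, f"duplicate name {name!r}"
        siblings.add((parent, name))
    for file_id in tree:
        # A valid chain to the root is shorter than the number of nodes.
        for steps, _ in enumerate(ancestors(tree, file_id)):
            assert steps < len(tree), f"{file_id} is part of a cycle"


def try_move(tree: Tree, file_id: int, new_parent: int, new_name: str) -> bool:
    """Apply a move only if it keeps the tree valid; report whether it ran."""
    if file_id == ROOT or new_parent == file_id:
        return False
    if file_id in ancestors(tree, new_parent):
        return False  # moving a folder into its own subtree
    for other, (parent, name) in tree.items():
        if other != file_id and parent == new_parent and name == new_name:
            return False  # sibling name collision
    tree[file_id] = (new_parent, new_name)
    return True


def stress(seed: int, steps: int = 500) -> Tree:
    """Apply random creates and moves, checking invariants after each one."""
    rng = random.Random(seed)
    tree: Tree = {ROOT: (None, "")}
    next_id = 1
    for _ in range(steps):
        if rng.random() < 0.3:
            tree[next_id] = (rng.choice(list(tree)), f"f{next_id}")
            next_id += 1
        else:
            ids = list(tree)
            try_move(tree, rng.choice(ids), rng.choice(ids), rng.choice("abc"))
        check_invariants(tree)
    return tree


final_tree = stress(seed=1)
print(len(final_tree), "nodes, all invariants held")
```

With a permissive model there would be little for `check_invariants` to assert, which is the testing gap the text describes.
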


Author: Jeremy
